AI-Ready Infrastructure, Delivered Globally.

About us

Innovative IT Solutions Provider

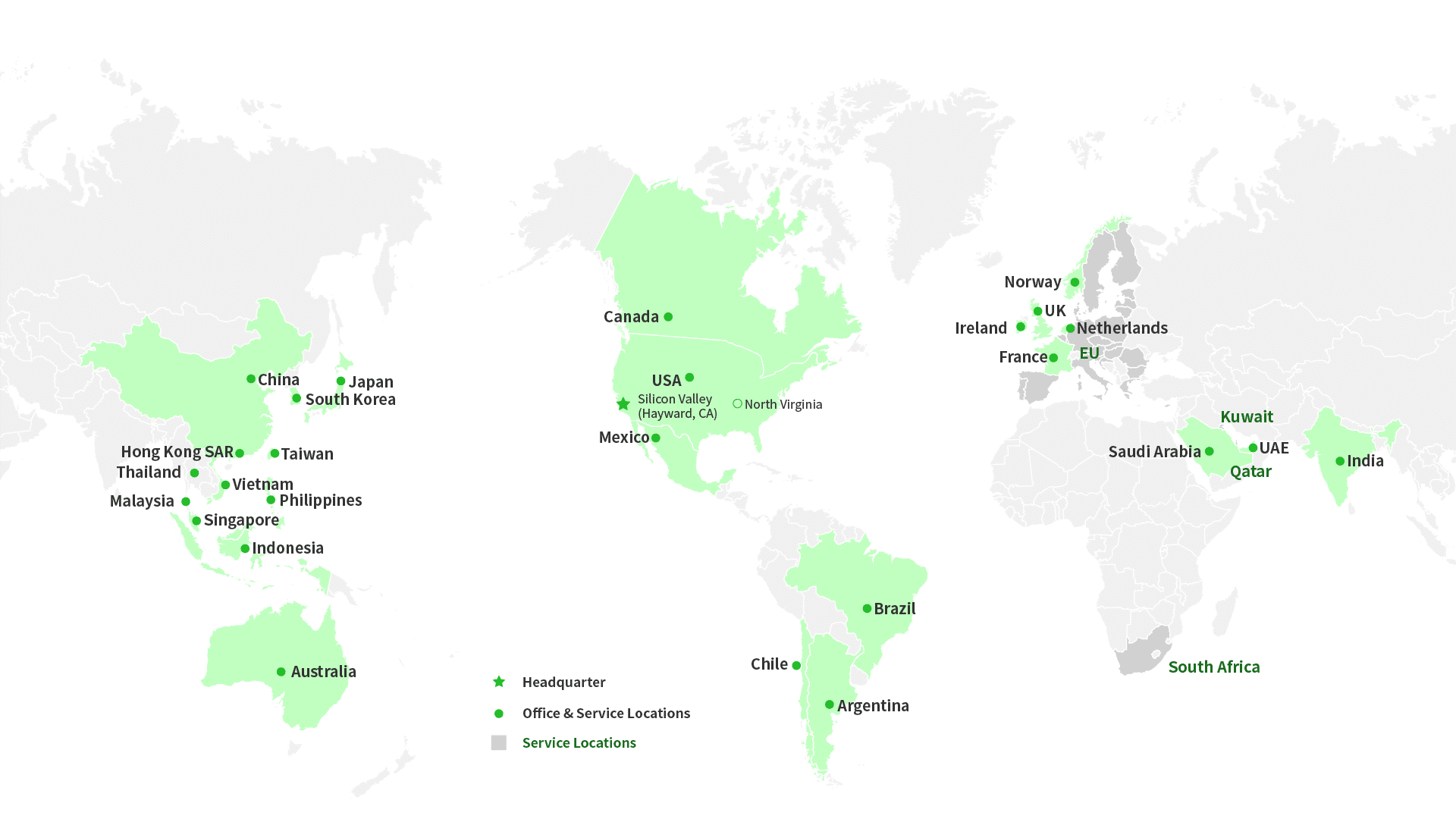

ByteBridge is a global IT solutions provider founded in Silicon Valley, US, with entities and service locations across six continents. With deep expertise in data centers and workplace solutions, we help organizations worldwide bridge technological gaps and scale with confidence.

Guided by our vision of “Bridging Visions, Shaping Futures,” our mission is to empower every organization to access technology easily, anywhere in the world, and achieve more. With a proven track record of serving some of the world’s leading international companies, ByteBridge is a trusted partner for businesses seeking innovative IT solutions.

What We Deliver

Why ByteBridge

Global Reach

Projects delivered across 30+ countries

End-to-End Capabilities

From procurement to deployment

Certified Experts

Multidisciplinary engineers & project managers

Local Presence, Global Scale

Regional teams across APAC, US & EMEA

Reliable Delivery

Proven track record with enterprise & hyperscale customers

Global Partnership

Global Coverage